Adaptive Testing: How AI Personalizes Learning Through Smarter Assessment


Adaptive testing is an assessment method that adjusts the difficulty and selection of questions in real time based on a student's responses, using algorithms and artificial intelligence to tailor each test to the individual learner. Instead of giving every student the same fixed set of questions, an adaptive test starts with a question of medium difficulty and then branches: a correct answer triggers a harder question, while an incorrect answer triggers an easier one, rapidly homing in on the student's true ability level.

The result is a test that is simultaneously shorter, more accurate, and less stressful than traditional fixed-form assessments. A high-achieving student is not bored by questions far below their level. A struggling student is not demoralized by questions far above it. Both receive a precise measure of their knowledge in less time — and the data generated reveals not just what they scored, but exactly which concepts they have mastered and which they have not.

Adaptive testing is not new: computer-based versions date to the 1970s, and the underlying idea is older still. But advances in artificial intelligence, machine learning, and knowledge graph technology are transforming it from a standardized testing technique into a powerful personalized learning AI tool that tutoring centers, schools, and EdTech platforms can use to drive better outcomes for every student.

The Evolution of Adaptive Testing

Early Adaptive Testing: Paper-Based Roots

The idea behind adaptive testing predates computers entirely. Psychometricians in the early 20th century recognized that fixed tests were inefficient: most questions on any given test are either too easy or too hard for any given student, providing little useful information. Alfred Binet's original intelligence tests (1905) used a form of adaptive administration, with examiners adjusting which questions to ask based on previous responses.

Computer Adaptive Testing (CAT)

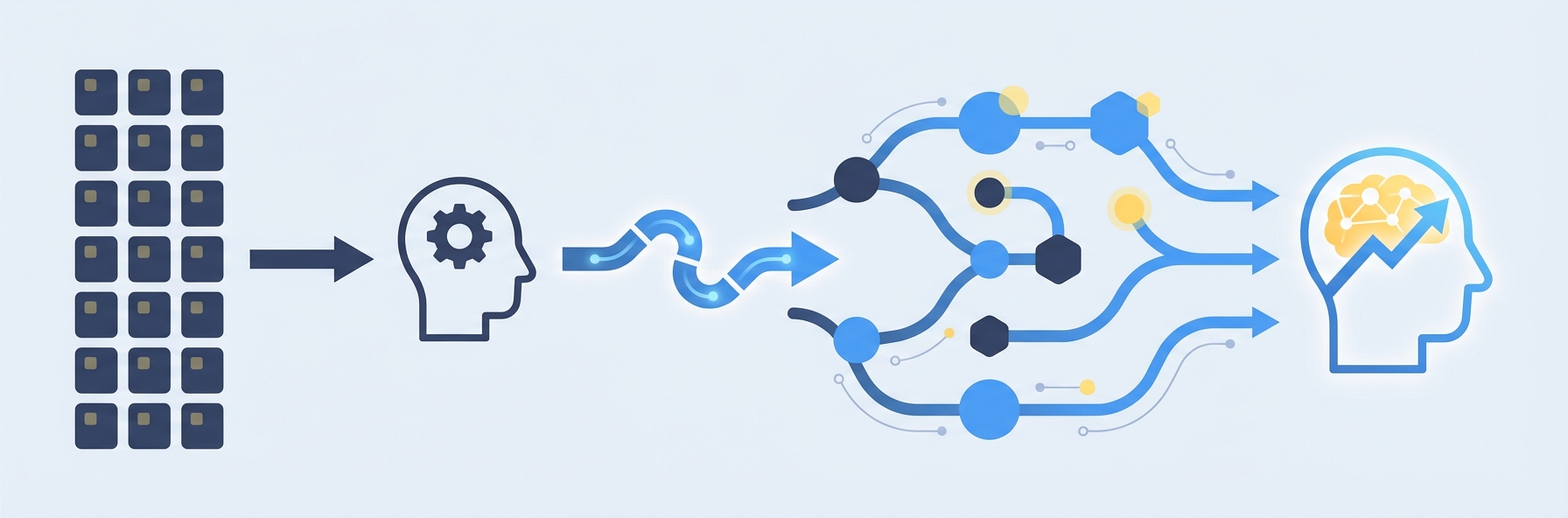

The first computer adaptive testing (CAT) systems emerged in the 1970s and 1980s, enabled by Item Response Theory (IRT), a statistical framework that models the relationship between a student's ability level and their probability of answering each question correctly. In its common three-parameter form, IRT assigns each question parameters for difficulty, discrimination (how well it differentiates between ability levels), and guessing probability.

In a CAT system, the computer selects each question to maximize the information gained about the student's ability, based on their responses to all previous questions. After enough questions (often substantially fewer than a comparable fixed test requires), the system arrives at an ability estimate with acceptable precision.

The most prominent example is the GRE (Graduate Record Examination), which adopted computer adaptive testing in 1993. The GMAT, NCLEX nursing exam, and numerous state K-12 assessments followed. These implementations proved that adaptive testing could deliver the same or better measurement accuracy with significantly fewer questions.

AI-Powered Adaptive Testing: The Current Frontier

Modern adaptive testing goes well beyond classical CAT. Instead of modeling student ability as a single number on a unidimensional scale, AI-powered systems model student knowledge as a complex, interconnected graph of concepts and skills. Personalized learning AI algorithms use this knowledge representation to make far more nuanced decisions about which questions to ask and what to teach next.

Key advances driving this evolution include:

- Knowledge graphs that model relationships between concepts (e.g., understanding fractions is prerequisite to understanding ratios, which is prerequisite to understanding proportional reasoning).

- Deep learning models that predict student performance based on patterns across millions of learning interactions.

- Reinforcement learning algorithms that optimize question selection not just for measurement accuracy but for learning outcomes — choosing questions that will teach as well as test.

- Multi-dimensional ability models that track mastery across dozens or hundreds of sub-skills simultaneously, rather than reducing a student to a single score.

How Computer Adaptive Testing Works: The Technical Foundation

Understanding the mechanics of adaptive testing helps explain why it outperforms traditional assessments.

Item Response Theory (IRT)

IRT is the mathematical backbone of most adaptive testing systems. The core idea is elegant: every question has measurable characteristics (difficulty, discrimination, guessing factor), and every student has a latent ability level. The probability that a specific student will answer a specific question correctly is a function of both.

A widely used IRT model, the three-parameter logistic (3PL) model, calculates:

P(correct) = c + (1 - c) / (1 + e^(-a(ability - b)))

Where:

- ability = the student's latent ability level (often written θ)

- b = question difficulty, on the same scale as ability

- a = question discrimination (how sharply it separates high-ability from low-ability students)

- c = guessing parameter (probability of a correct answer by chance)
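The formula translates directly into code. The Python sketch below is an illustration, not any platform's implementation: it follows the logistic form above (omitting the optional scaling constant D ≈ 1.7 that some texts include), adds the standard 3PL item information function, which is the quantity a CAT typically maximizes when choosing the next question, and uses made-up parameter values.

```python
import math

def p_correct(theta: float, a: float, b: float, c: float) -> float:
    """3PL probability that a student with ability theta answers the item correctly."""
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))

def item_information(theta: float, a: float, b: float, c: float) -> float:
    """Fisher information the item carries about theta (standard 3PL formula)."""
    p = p_correct(theta, a, b, c)
    return a**2 * ((p - c) / (1.0 - c)) ** 2 * (1.0 - p) / p

# Illustrative item: moderate discrimination, difficulty 0, four-option multiple choice (c = 0.25)
a, b, c = 1.2, 0.0, 0.25
for theta in (-2.0, 0.0, 2.0):
    print(f"theta={theta:+.1f}  P(correct)={p_correct(theta, a, b, c):.3f}  "
          f"information={item_information(theta, a, b, c):.3f}")
```

When ability equals difficulty, the 3PL probability is c + (1 - c)/2 (0.625 for this item) and exactly 0.5 when c = 0, which is why a well-targeted adaptive test expects students to miss a substantial share of questions.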

The Adaptive Algorithm

A typical adaptive testing session proceeds as follows:

1. Initialization: The system starts with a prior estimate of the student's ability, either the population average or an estimate based on previous data.

2. Question Selection: The algorithm selects the question from the item bank that will provide the most information about the student's ability at the current estimate. This is typically the question whose difficulty most closely matches the current ability estimate.

3. Response Evaluation: The student answers, and the system updates the ability estimate using Bayesian or maximum likelihood methods, incorporating all responses so far.

4. Iteration: Steps 2 and 3 repeat until a stopping criterion is met, typically when the standard error of the ability estimate (and hence the width of its confidence interval) falls below a threshold, or when a maximum number of questions has been reached.

5. Score Reporting: The final ability estimate is reported, often along with confidence intervals and diagnostic information about strengths and weaknesses.
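The session described above can be sketched as a short Python loop. This is a minimal illustration under stated assumptions, not how any particular platform implements CAT: it assumes a 3PL item bank stored as (a, b, c) tuples, uses expected a posteriori (EAP) estimation over a grid with a standard normal prior as the Bayesian update, picks the unused item with maximum Fisher information at the current estimate, and stops when the posterior standard deviation (used as the standard error) falls below 0.3 or after 30 questions. The threshold, item limit, item bank, and simulated student are all hypothetical.

```python
import math
import random

def p_correct(theta, a, b, c):
    """3PL probability of a correct answer."""
    return c + (1 - c) / (1 + math.exp(-a * (theta - b)))

def item_information(theta, a, b, c):
    """3PL Fisher information of one item at ability theta."""
    p = p_correct(theta, a, b, c)
    return a**2 * ((p - c) / (1 - c)) ** 2 * (1 - p) / p

GRID = [i / 10 for i in range(-40, 41)]  # candidate ability values from -4.0 to +4.0

def eap_estimate(responses):
    """Bayesian update: posterior mean and SD of ability on GRID, standard normal prior."""
    weights = []
    for theta in GRID:
        w = math.exp(-0.5 * theta**2)  # unnormalized N(0, 1) prior
        for (a, b, c), correct in responses:
            p = p_correct(theta, a, b, c)
            w *= p if correct else 1 - p
        weights.append(w)
    total = sum(weights)
    mean = sum(t * w for t, w in zip(GRID, weights)) / total
    var = sum((t - mean) ** 2 * w for t, w in zip(GRID, weights)) / total
    return mean, math.sqrt(var)

def run_cat(item_bank, answer, se_target=0.3, max_items=30):
    """Run one adaptive session; answer(item) returns True for a correct response."""
    theta, se = 0.0, 1.0  # initialization: population-average prior
    responses, unused = [], list(item_bank)
    # iteration: continue until the stopping criterion is met
    while unused and len(responses) < max_items and se > se_target:
        # question selection: most informative unused item at the current estimate
        item = max(unused, key=lambda it: item_information(theta, *it))
        unused.remove(item)
        # response evaluation: record the answer and update the estimate
        responses.append((item, answer(item)))
        theta, se = eap_estimate(responses)
    return theta, se, len(responses)  # score reporting

if __name__ == "__main__":
    rng = random.Random(42)
    # Hypothetical bank: 300 items with random discrimination and difficulty, guessing 0.2
    bank = [(rng.uniform(0.8, 2.0), rng.uniform(-3.0, 3.0), 0.2) for _ in range(300)]
    true_theta = 1.0

    def student(item):
        return rng.random() < p_correct(true_theta, *item)

    theta, se, n = run_cat(bank, student)
    print(f"estimate={theta:.2f}  SE={se:.2f}  questions used={n}")
```

Operational systems typically layer item-exposure control and content balancing on top of pure maximum-information selection, so that the same few highly discriminating items are not shown to every student; this sketch omits both.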

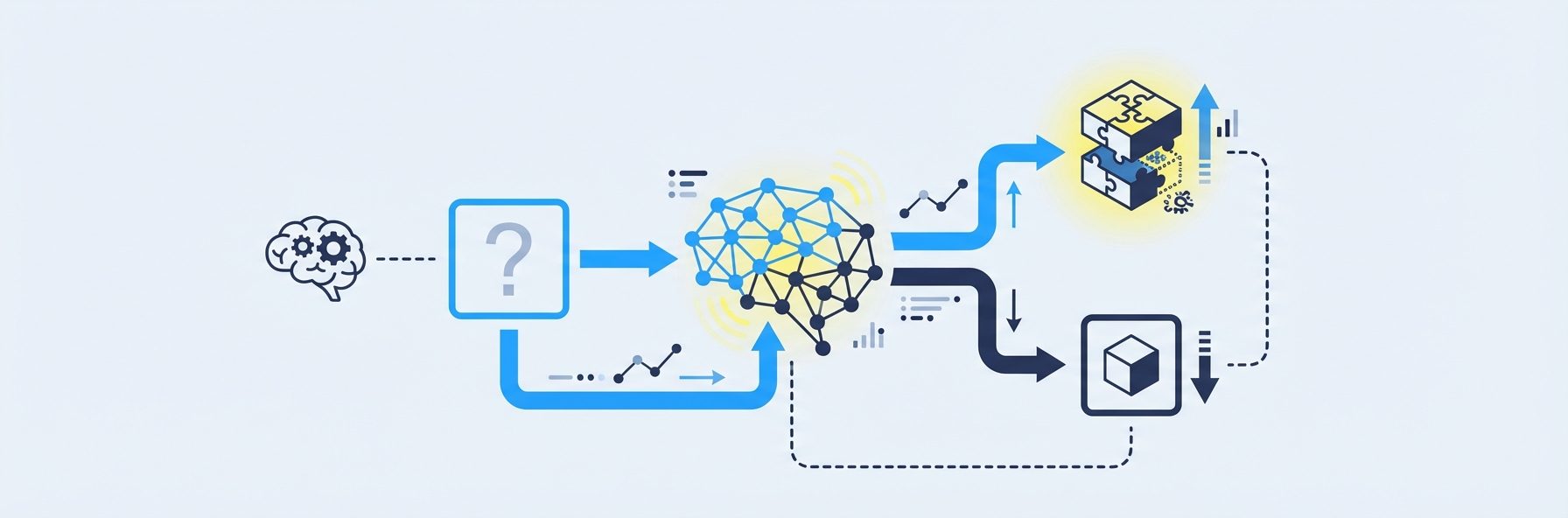

Knowledge Graphs: The Next Level

Classical CAT treats student ability as a single dimension. Modern personalized learning AI systems instead model knowledge as a graph — a network of nodes (concepts and skills) connected by edges (prerequisite and related-concept relationships).

In a knowledge graph for middle school mathematics, for example:

- "Adding fractions" depends on "Understanding fractions" and "Finding common denominators"

- "Solving linear equations" depends on "Order of operations," "Inverse operations," and "Variable manipulation"

- "Proportional reasoning" depends on "Ratios," "Fractions," and "Multiplication"

When adaptive testing uses a knowledge graph, the algorithm does not just determine whether a student is "good at math" or "bad at math." It pinpoints exactly which concepts are mastered, which are partially understood, and which have not been learned at all — and it identifies the most productive next concepts to learn based on the prerequisite structure.

This is the approach IntelGrader is developing for tutoring centers: adaptive testing powered by a knowledge graph that maps the relationships between mathematical concepts, enabling precise diagnostic assessment that feeds directly into personalized learning recommendations.
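The "most productive next concepts" can be read directly off the prerequisite structure. The Python sketch below is illustrative only: the graph is a hypothetical encoding of the examples above (with "Understanding fractions" and "Fractions" merged into one node, and the Ratios prerequisite taken from the fractions-to-ratios example earlier), and it treats mastery as a fixed set, whereas real systems usually estimate it probabilistically. It returns the concepts that are not yet mastered but whose prerequisites all are; in knowledge space theory, which underlies ALEKS, a closely related set is called the outer fringe of the student's knowledge state.

```python
# Hypothetical prerequisite graph built from the examples above: concept -> prerequisites.
# Concepts the examples do not expand are treated as having no prerequisites.
PREREQUISITES: dict[str, set[str]] = {
    "Fractions": set(),
    "Finding common denominators": set(),
    "Adding fractions": {"Fractions", "Finding common denominators"},
    "Order of operations": set(),
    "Inverse operations": set(),
    "Variable manipulation": set(),
    "Solving linear equations": {"Order of operations", "Inverse operations", "Variable manipulation"},
    "Multiplication": set(),
    "Ratios": {"Fractions"},
    "Proportional reasoning": {"Ratios", "Fractions", "Multiplication"},
}

def ready_to_learn(mastered: set[str]) -> list[str]:
    """Concepts not yet mastered whose prerequisites are all mastered."""
    return sorted(
        concept
        for concept, prereqs in PREREQUISITES.items()
        if concept not in mastered and prereqs <= mastered
    )

mastered = {"Fractions", "Multiplication", "Order of operations"}
print(ready_to_learn(mastered))
# ['Finding common denominators', 'Inverse operations', 'Ratios', 'Variable manipulation']
```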

Benefits of Adaptive Testing for Students

More Accurate Measurement

Fixed tests waste many of their questions. Easy questions reveal very little about a high-achieving student, and hard questions reveal very little about a struggling student. Adaptive testing eliminates this waste by selecting questions targeted to the boundary of each student's knowledge. Research by Weiss and Kingsbury (1984) demonstrated that adaptive tests can achieve the same measurement precision as fixed tests with 40 to 60 percent fewer questions.
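A quick calculation shows why targeting matters. The Python sketch below uses the two-parameter special case of the IRT model (no guessing) with made-up parameter values; it illustrates the principle and does not reproduce Weiss and Kingsbury's analysis. It also relies on the standard large-sample approximation that the standard error of an ability estimate is about 1 / sqrt(total test information).

```python
import math

def info_2pl(theta: float, a: float, b: float) -> float:
    """Fisher information of a 2PL item (no guessing) at ability theta."""
    p = 1.0 / (1.0 + math.exp(-a * (theta - b)))
    return a * a * p * (1.0 - p)

theta = 2.0  # a high-achieving student
a = 1.5      # same discrimination for every item, for a fair comparison
easy = info_2pl(theta, a, b=-2.0)    # question far below the student's level
matched = info_2pl(theta, a, b=2.0)  # question at the student's level
print(f"information per easy item:    {easy:.4f}")
print(f"information per matched item: {matched:.4f}")

# Standard error of the ability estimate is approximately 1 / sqrt(total information)
print(f"SE after 20 easy items:   {1 / math.sqrt(20 * easy):.2f}")
print(f"SE after 5 matched items: {1 / math.sqrt(5 * matched):.2f}")
```

With these hypothetical numbers, a question pitched at the student's level carries roughly a hundred times the information of one pitched four units below, so five well-targeted questions measure this student far more precisely than twenty easy ones.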

Reduced Test Anxiety

Many students report lower anxiety on adaptive tests because they are less likely to encounter long stretches of questions that are frustratingly difficult or boringly easy. The experience feels more like a conversation than an interrogation: each question is appropriately challenging, maintaining engagement without causing distress.

A 2022 study published in Educational Psychology Review found that students taking computer adaptive tests reported significantly lower anxiety levels than those taking equivalent fixed-form tests, with no reduction in measurement accuracy.

Faster Assessments

Fewer questions means less time testing and more time learning. A traditional 60-question diagnostic math assessment might take 45 minutes. An adaptive version covering the same content domain can achieve comparable diagnostic precision in 20 to 25 minutes. For tutoring centers running tight schedules, this efficiency gain is substantial.

Immediate, Precise Diagnostics

Unlike a traditional test that returns a single score, adaptive testing produces a detailed knowledge profile. A student does not just receive "72 percent in math." They receive a map showing mastery of addition and multiplication, partial understanding of fractions, and a gap in understanding of ratios. This precision makes the assessment directly actionable — the tutor knows exactly what to teach next.

Personalized Learning Paths

When adaptive testing is combined with knowledge graph technology, the diagnostic results naturally generate personalized learning paths. The system identifies the most productive next concepts to study — those that the student is ready to learn (prerequisites are met) but has not yet mastered. This transforms assessment from a periodic checkpoint into a continuous engine for personalized learning.
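A learning path toward a particular goal can be derived from the same prerequisite structure: collect the target's unmastered prerequisites, then order them so each concept comes after the concepts it depends on (a topological sort). The Python sketch below is a hypothetical illustration using the standard library's graphlib module and a small made-up graph; a production system would likely weigh more than prerequisites alone.

```python
from graphlib import TopologicalSorter

# Hypothetical prerequisite graph (concept -> prerequisites), for illustration only
PREREQUISITES = {
    "Fractions": set(),
    "Finding common denominators": set(),
    "Adding fractions": {"Fractions", "Finding common denominators"},
    "Multiplication": set(),
    "Ratios": {"Fractions"},
    "Proportional reasoning": {"Ratios", "Fractions", "Multiplication"},
}

def learning_path(target: str, mastered: set[str]) -> list[str]:
    """Unmastered concepts needed to reach `target`, each listed after its prerequisites."""
    needed: set[str] = set()
    stack = [target]
    while stack:  # walk backwards through prerequisites, skipping mastered concepts
        concept = stack.pop()
        if concept in mastered or concept in needed:
            continue
        needed.add(concept)
        stack.extend(PREREQUISITES.get(concept, set()))
    # Order only the concepts still to be learned; static_order() yields prerequisites first
    subgraph = {c: PREREQUISITES.get(c, set()) & needed for c in needed}
    return list(TopologicalSorter(subgraph).static_order())

print(learning_path("Proportional reasoning", mastered={"Multiplication"}))
# ['Fractions', 'Ratios', 'Proportional reasoning']
```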

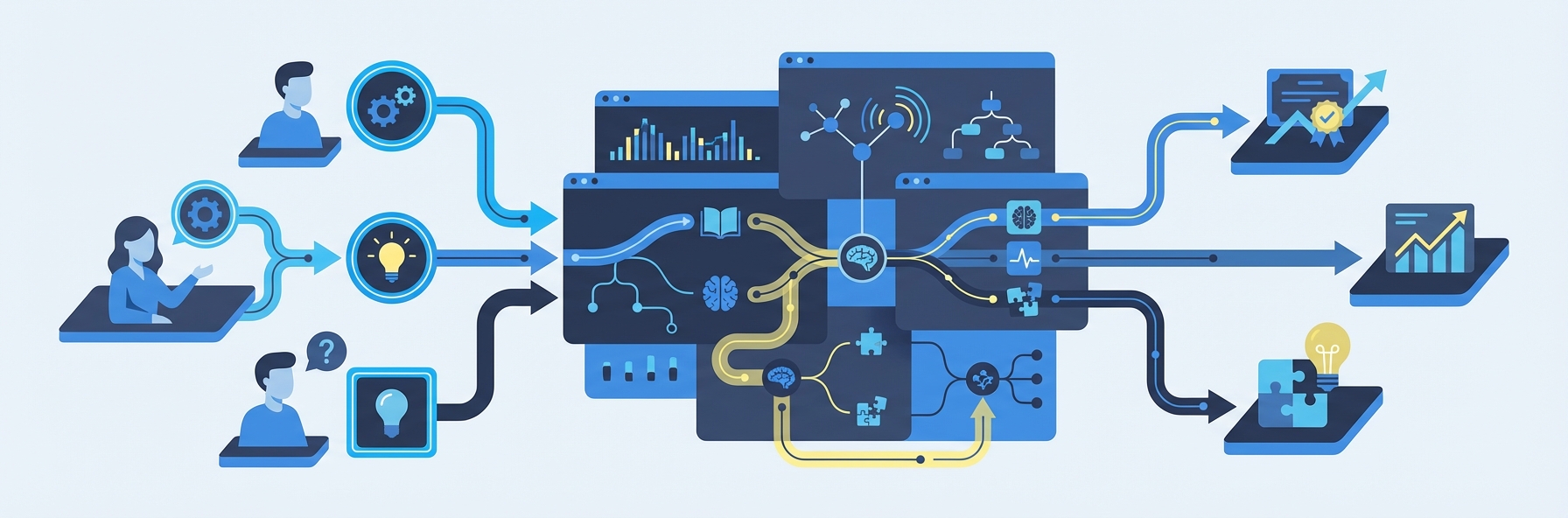

Benefits of Adaptive Testing for Educators

Diagnostic Precision Without Extra Work

Traditionally, building a diagnostic assessment that covers a wide range of difficulty levels and pinpoints specific knowledge gaps requires significant expertise in test construction. Adaptive testing automates this process. The educator does not need to create differentiated assessments for different ability levels — the system handles differentiation dynamically.

Time Savings

Shorter assessments mean less class time consumed by testing. AI-powered scoring means no manual grading. Automated diagnostic reports mean no manual data analysis. For tutoring center operators, adaptive testing can replace the informal diagnostic process — where a tutor spends the first several sessions figuring out what a student knows — with a precise assessment completed in a single sitting.

Data-Driven Instruction

The detailed knowledge profiles generated by adaptive testing give educators actionable data. A tutor working with smart grading and adaptive testing data can see exactly where each student's knowledge breaks down and tailor instruction accordingly. At the group level, the data reveals which concepts are commonly misunderstood across the class, informing lesson planning and curriculum adjustment.

Progress Monitoring Over Time

When students take adaptive assessments periodically, their knowledge profiles can be compared over time, revealing growth trajectories and identifying concepts where progress has stalled. This longitudinal view is far more informative than comparing scores on different fixed tests, which may vary in difficulty.
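Because IRT-based adaptive tests report ability on a common scale, two results can be compared directly, as long as measurement error is taken into account. The Python sketch below is a hypothetical illustration, not any platform's reporting method: it assumes the two estimates' errors are independent and flags growth only when it exceeds about two standard errors of the difference (z = 1.96, roughly a 95 percent level).

```python
import math

def growth_check(theta_before: float, se_before: float,
                 theta_after: float, se_after: float, z_crit: float = 1.96):
    """Compare two ability estimates reported on the same IRT scale.

    Returns (growth, standard error of the growth, whether growth exceeds
    z_crit standard errors). Assumes the two measurement errors are independent.
    """
    growth = theta_after - theta_before
    se_growth = math.sqrt(se_before**2 + se_after**2)
    return growth, se_growth, abs(growth) > z_crit * se_growth

print(growth_check(-0.4, 0.3, 0.6, 0.3))  # growth of 1.0 against an SE of about 0.42
print(growth_check(-0.2, 0.3, 0.3, 0.3))  # growth of 0.5, within measurement error
```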

Current Adaptive Testing Tools and Platforms

ALEKS (Assessment and Learning in Knowledge Spaces)

ALEKS, developed at the University of California, Irvine, and now owned by McGraw-Hill, is one of the oldest and most researched adaptive learning platforms. It uses knowledge space theory — closely related to the knowledge graph approach — to model what a student knows and does not know across a subject domain. ALEKS periodically reassesses students to update their knowledge profiles, and its learning mode selects problems at the boundary of the student's knowledge.

ALEKS covers mathematics from basic arithmetic through calculus, as well as chemistry, statistics, and business courses. Its research base is extensive, with multiple studies demonstrating significant learning gains.

MAP Growth (NWEA)

MAP Growth is a computer adaptive test widely used for K-12 benchmark assessment in the United States. It measures student achievement and growth in reading, language usage, mathematics, and science. MAP Growth uses IRT-based adaptive algorithms to select questions and produces RIT (Rasch Unit) scores on a consistent scale, which allow longitudinal progress tracking.

Over 10 million students take MAP Growth assessments annually, making it one of the most widely used adaptive testing platforms in K-12 education.

DreamBox

DreamBox uses adaptive technology specifically for K-8 mathematics. What distinguishes DreamBox from simpler adaptive systems is its analysis of how students solve problems — not just whether they get the right answer. The platform monitors response strategies, time patterns, and hint usage to build a nuanced model of each student's understanding.

i-Ready (Curriculum Associates)

i-Ready combines adaptive diagnostic assessment with personalized instruction for K-12 reading and mathematics. The diagnostic assessment adapts in real time to identify each student's specific needs, and the instructional platform delivers targeted lessons based on the diagnostic results.

IntelGrader: Adaptive Testing for Tutoring Centers

IntelGrader is developing adaptive testing capabilities specifically designed for the tutoring center environment. Building on its core strength in AI-powered grading of handwritten math worksheets, IntelGrader's adaptive testing feature will use knowledge graph technology to map the relationships between mathematical concepts and precisely diagnose each student's mastery profile.

The vision is a system where every graded worksheet contributes data to the student's knowledge graph. Over time, the platform builds an increasingly precise picture of what each student knows and does not know — and uses that picture to recommend targeted practice, flag concepts that need reteaching, and generate adaptive assessments that efficiently measure progress. For tutoring centers that already use IntelGrader for AI grading, adaptive testing is a natural extension that makes the grading data directly actionable.

Implementing Adaptive Testing in Tutoring Centers

Tutoring centers are uniquely well-positioned to benefit from adaptive testing, for several reasons.

Why Tutoring Centers Are Ideal

- Small group sizes make it practical to individualize based on adaptive assessment results.

- Flexible curricula allow tutors to adjust instruction based on diagnostic data, unlike schools bound to rigid pacing guides.

- Parent expectations for visible progress create demand for the precise measurement adaptive testing provides.

- Competitive differentiation — tutoring centers that offer AI-powered adaptive assessment stand out from competitors using generic worksheets.

- Business model alignment — more precise diagnostics lead to more effective tutoring, which leads to better outcomes, which leads to retention and referrals.

Implementation Steps

1. Choose the right tool. For math-focused tutoring, look for platforms with strong knowledge graph models and alignment to your curriculum. IntelGrader's upcoming adaptive testing is designed specifically for this use case.

2. Integrate with existing workflows. Adaptive testing should complement, not replace, your current assessment practices. Use adaptive tests for initial diagnostics and periodic progress checks, while continuing to use regular practice worksheets (graded by AI) for ongoing skill building.

3. Train your tutors. Adaptive assessment data is only valuable if tutors know how to interpret and act on it. Invest in training that shows tutors how to read knowledge profiles, identify priority concepts, and adjust instruction accordingly.

4. Communicate with parents. Adaptive testing generates the kind of detailed, data-driven progress information that parents love. Use knowledge profiles and growth trajectories in parent conferences and progress reports to demonstrate the value of your program.

5. Iterate. Use the data from adaptive assessments to continuously refine your curriculum and teaching approaches. Which concepts do students consistently struggle with? Where do knowledge gaps cluster? This information is gold for curriculum development.

Challenges and Limitations of Adaptive Testing

Content Development Costs

Adaptive testing requires large, well-calibrated item banks. Each question must be field-tested to establish its IRT parameters (difficulty, discrimination, guessing). Building a comprehensive item bank for a single subject at a single grade level can require thousands of questions and extensive statistical analysis. This is why most adaptive testing is delivered by large platforms with significant development resources.

Equitable Access

Adaptive testing requires technology: computers or tablets, reliable internet, and testing software. Students without access to these resources are disadvantaged. While the digital divide has narrowed considerably since 2020, it remains a concern, particularly in rural areas and developing countries.

Gaming and Test Security

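Because adaptive tests select questions based on previous responses, there is a risk that sophisticated test-takers could try to game the system, for example by deliberately answering early questions incorrectly to be routed to easier questions, hoping that a long run of correct answers on those will produce a misleadingly high score. Modern adaptive algorithms include safeguards against this, and IRT-based scoring already accounts for the difficulty of each question answered, so correct answers on easy questions raise the ability estimate only modestly. Even so, the risk is not entirely eliminated.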

Measurement Limitations for Constructed Responses

Most adaptive testing systems work with selected-response (multiple-choice) or short-answer questions. Adapting tests with constructed responses — essays, multi-step mathematical proofs, extended explanations — is more challenging because these responses are harder to score automatically in real time. However, advances in AI grading are narrowing this gap: platforms like IntelGrader that can grade handwritten mathematical working in real time are creating the technical foundation for adaptive testing with constructed responses.

Psychological Considerations

Some students are motivated by the experience of answering questions correctly, and the constant challenge of adaptive testing — where roughly half the questions are expected to be answered incorrectly at the optimal difficulty level — can feel discouraging. Careful communication about how adaptive tests work and what scores mean is important to prevent misinterpretation.

What the Research Says

The evidence base for adaptive testing is substantial and largely positive.

Measurement efficiency: A meta-analysis by Segall (2005) found that computer adaptive tests achieve comparable measurement precision to fixed tests with approximately 50 percent fewer items, consistent with earlier findings by Weiss and Kingsbury.

Learning gains from adaptive instruction: A 2021 meta-analysis published in Review of Educational Research found that intelligent tutoring systems using adaptive techniques produced learning gains equivalent to approximately 0.4 standard deviations — comparable to moving from the 50th to the 66th percentile.

Student experience: Research by Wise and Kong (2005) found that students taking adaptive tests reported higher engagement and lower anxiety compared to fixed-form tests.

Diagnostic precision: Studies of ALEKS by Falmagne et al. (2013) demonstrated that knowledge space-based adaptive assessment could accurately identify student knowledge states with high precision, enabling targeted instruction that produced significant learning gains.

Growth measurement: NWEA's research on MAP Growth has shown that adaptive assessment provides more sensitive measurement of student growth than fixed-form tests, particularly for students at the extremes of the ability distribution.

The evidence supports the conclusion that adaptive testing is more efficient, more precise, and more informative than traditional fixed-form assessment — particularly when combined with personalized learning AI that uses diagnostic results to guide instruction.
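The percentile comparison attached to the 0.4 standard deviation figure is a standard conversion that assumes normally distributed outcomes: a student at the median who gains 0.4 standard deviations lands at Φ(0.4) of the original distribution, where Φ is the standard normal cumulative distribution function. A minimal Python check:

```python
import math

def percentile_after_gain(effect_size_sd: float) -> float:
    """Percentile reached by a median (50th percentile) student after gaining
    `effect_size_sd` standard deviations, assuming normally distributed scores."""
    standard_normal_cdf = 0.5 * (1.0 + math.erf(effect_size_sd / math.sqrt(2.0)))
    return 100.0 * standard_normal_cdf

print(f"{percentile_after_gain(0.4):.1f}")
```

This gives about 65.5, which rounds to the 66th percentile.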

The Future of Adaptive Testing

Continuous Assessment

The distinction between "testing" and "learning" is blurring. Future adaptive systems will assess students continuously through their daily practice activities — every problem solved, every question answered — rather than through periodic discrete tests. Each interaction updates the student's knowledge profile, and the system adjusts the next learning activity accordingly. IntelGrader's approach of building student knowledge profiles from ongoing graded worksheet data points in this direction.

Multimodal Assessment

Current adaptive tests are primarily text- and image-based. Future systems will incorporate voice interaction, interactive simulations, and even physical manipulation of virtual objects. A student might demonstrate understanding of physics through an interactive simulation or explore geography through an AI-generated virtual environment, all while the adaptive system evaluates their understanding in real time.

Cross-Platform Knowledge Profiles

Today, a student's adaptive assessment data is siloed within each platform. Future standards and interoperability frameworks will enable knowledge profiles that follow students across platforms and even across institutions, creating a comprehensive, portable record of what each student knows and what they are ready to learn next.

Integration with AI Grading

The combination of AI grading and adaptive testing is particularly powerful. When an AI system can grade handwritten work in real time and feed the results into an adaptive knowledge model, the distinction between practice and assessment disappears. Every worksheet becomes an adaptive assessment opportunity, and every assessment result informs the next practice session. This is the integrated vision that platforms like IntelGrader are working toward.

Frequently Asked Questions

What is adaptive testing?

Adaptive testing is an assessment method where the selection and difficulty of questions adjust in real time based on the student's responses. If a student answers correctly, the next question is harder; if they answer incorrectly, the next question is easier. This approach measures student ability more precisely and efficiently than traditional fixed tests, typically requiring 40 to 60 percent fewer questions to achieve the same measurement accuracy.

How does adaptive testing differ from traditional testing?

Traditional tests give every student the same questions in the same order, regardless of ability. Adaptive tests customize the question set for each student, selecting questions that are most informative given what the system already knows about the student's ability. This produces more precise measurements in less time, reduces test anxiety, and generates detailed diagnostic information about which specific concepts a student has or has not mastered.

What are the benefits of adaptive testing for tutoring centers?

Adaptive testing gives tutoring centers precise diagnostic data that replaces the informal "getting to know you" period with a structured assessment completed in a single session. It identifies exactly which concepts each student has mastered and which need work, enabling tutors to personalize instruction immediately. Over time, periodic adaptive assessments track growth with precision that impresses parents and demonstrates program effectiveness. Platforms like IntelGrader are developing adaptive testing specifically for the tutoring center workflow.

Is personalized learning AI effective?

Yes, the research evidence is strong. A meta-analysis in the Review of Educational Research found that personalized learning AI in the form of intelligent tutoring systems using adaptive techniques produced learning gains of approximately 0.4 standard deviations, a meaningful and educationally significant effect. The gains are largest when the technology is used to complement rather than replace human instruction, and when diagnostic data is used to inform teaching decisions.

What technology is needed to implement adaptive testing?

At minimum, adaptive testing requires internet-connected devices (computers or tablets) for students, adaptive testing software, and a calibrated item bank. For tutoring centers, cloud-based platforms eliminate the need for local server infrastructure. The most important non-technological requirement is tutor training: the diagnostic data from adaptive testing is only valuable if educators know how to interpret it and adjust their instruction accordingly. Book a demo with IntelGrader to explore adaptive testing options for your center.

Sources

Weiss, D. J., & Kingsbury, G. G. (1984). "Application of Computerized Adaptive Testing to Educational Problems." Journal of Educational Measurement, 21(4), 361-375. — Foundational research on adaptive testing efficiency.

Falmagne, J.-C., Albert, D., Doble, C., Eppstein, D., & Hu, X. (2013). Knowledge Spaces: Applications in Education. Springer. — Comprehensive reference on knowledge space theory and its application in adaptive learning systems including ALEKS.

Steenbergen-Hu, S., & Cooper, H. (2014). "A Meta-Analysis of the Effectiveness of Intelligent Tutoring Systems on College Students' Academic Learning." Journal of Educational Psychology, 106(2), 331-347. — Meta-analytic evidence on intelligent tutoring system effectiveness.

NWEA. (2024). MAP Growth Technical Report. — Technical documentation on the most widely used adaptive assessment in US K-12 education.

Hattie, J. (2023). Visible Learning: The Sequel. Routledge. — Updated meta-analyses including evidence on feedback timing and assessment for learning.

Learn more about AI-powered education tools on the IntelGrader blog or book a demo to explore how AI grading and adaptive assessment can transform your tutoring center.

Ready to transform your grading?

See how IntelGrader can save your tutoring center 10+ hours per week with AI-powered grading.